In Defense of Nate Silver

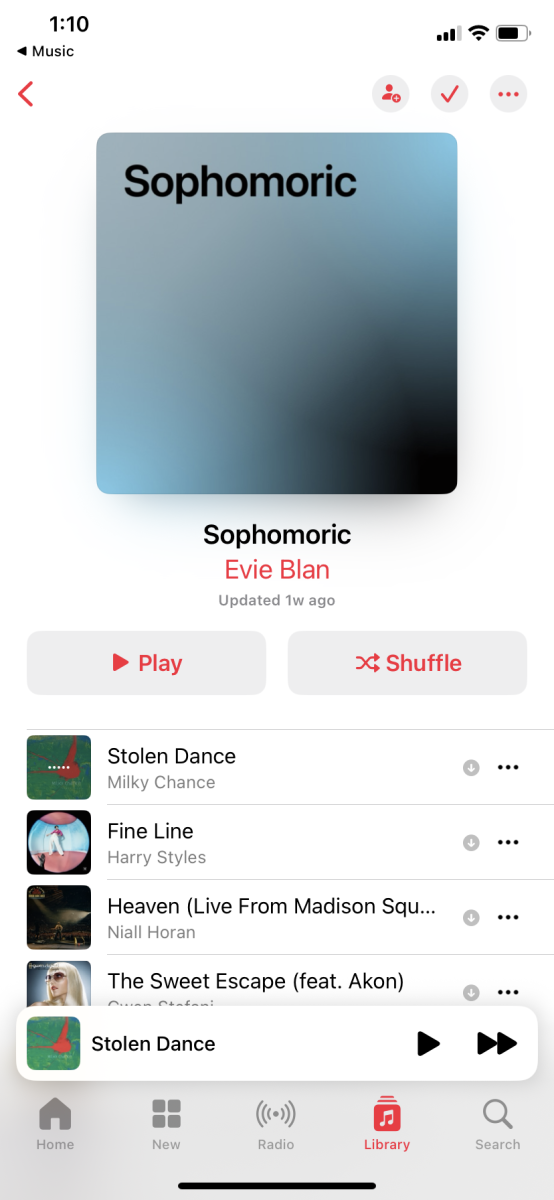

Nate Silver by Jack Martin, licensed under CC 2.0.

Nate Silver, founder and editor in chief of FiveThirtyEight, gives a talk at South by Southwest in 2013.

January 13, 2021

Nate Silver is the grand forecaster of them all: the statistician who correctly called 49 of 50 states in the 2008 election and all 50 in 2012. Heading into 2016, his reputation was very high, built on those correct predictions and on his earlier work developing sabermetrics models for baseball. Time Magazine named him one of the world’s 100 most influential people in 2009.

However, Silver and his website FiveThirtyEight have received a lot of backlash over the last two election cycles for their so-called wrong predictions. In 2016, his model gave Hillary Clinton a 71% chance of winning. As we know, Clinton lost, and the criticism flooded in. Critics pointed to the inflated numbers in Clinton’s favor, not just in Silver’s forecast but across all the other major political projections.

In 2020, Silver gave Joe Biden around a 90% chance of winning the presidency, which proved correct, but he was also criticized for missing in swing states like Florida, Ohio, Texas, and Pennsylvania, where his projections overstated Biden’s share of the vote.

How does Silver’s Model work?

Silver’s model is built on polling data produced by various polling organizations such as Fox News, Emerson College, and Quinnipiac University. He weights each poll by sample size, pollster quality, and recency (how close it is to the present day). Silver’s formula (which has never been revealed) takes these factors into account while also adjusting for extraneous circumstances. For example, because of COVID-19, Silver made it clear that he had factored in an uncertainty index, which builds in an additional assumption that the polls could be wrong. So Silver feeds his numbers into his secret formula and gets out results that he turns into percentage chances of each candidate winning their race.
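Since the real formula is not public, the following is only a rough sketch of what weighting polls by sample size, pollster quality, and recency could look like. The `Poll` fields, the square-root sample-size term, the 0-to-1 quality score, and the 14-day half-life are all assumptions made for illustration, not FiveThirtyEight’s actual method.

```python
import math
from dataclasses import dataclass


@dataclass
class Poll:
    margin: float      # candidate's lead in percentage points
    sample_size: int   # number of respondents
    quality: float     # hypothetical pollster rating between 0 and 1
    days_old: int      # days since the poll was conducted


def poll_weight(poll, half_life_days=14.0):
    """Combine the three factors into one weight (illustrative choices only)."""
    size_term = math.sqrt(poll.sample_size)                 # bigger samples count more
    recency_term = 0.5 ** (poll.days_old / half_life_days)  # older polls fade out
    return size_term * poll.quality * recency_term


def weighted_poll_average(polls, half_life_days=14.0):
    """Weighted average of poll margins."""
    weights = [poll_weight(p, half_life_days) for p in polls]
    total = sum(weights)
    if total == 0:
        raise ValueError("no polls with positive weight")
    return sum(w * p.margin for w, p in zip(weights, polls)) / total
```

With two otherwise identical polls, one taken today showing a 10-point lead and one taken 14 days ago showing a tie, the older poll gets half the weight, so the average lands closer to the newer poll than a simple mean would.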

What did Silver get right in 2020?

Silver definitely showed caution compared to some of the polling that was spread across the major media platforms heading into the 2020 election. Major polling (NBC/WSJ) in the days and weeks before the election showed Biden winning the nationwide vote by 10 percentage points, which in FiveThirtyEight’s terms constitutes a landslide. Silver’s model, however, projected only a 29% chance that Biden would win the popular vote by more than 10 percentage points, as the nationwide polls suggested. He also correctly projected, in order of vote share, the states that would go blue, and the Electoral College result was within the 80% confidence interval of his model (as of now).

Another point Silver emphasized more this year is that even a 3-point nationwide polling error would still have led to a Biden win in his forecast, and that has proved true. While a nationwide shift does not necessarily translate into the same shift in each state, it does explain why Biden was given a higher chance of winning the presidency than Clinton was in 2016. Once again there was a polling error of similar size, but Biden still won in the end.

So why was Silver wrong in 2020?

I believe two factors could explain why Silver and the polls he relied on were off in 2020: a shift in how demographic groups voted and larger turnout. On demographics, we saw a shift among minority voters, especially Latinos, toward Trump. In Florida, for example, there was a 10-point swing among Latino voters in favor of Trump, most noticeably in Miami-Dade County. Anti-socialist advertising may have been effective enough to pull the vote away from the polls in certain places. It is more likely, though, that the polls had trouble gauging voter turnout.

The US election had higher turnout, as we know, which is ironically a bad thing for polls. Polls usually rely on likely voters, and whether a respondent counts as a likely voter is determined by a questionnaire that each polling organization poses. However, given the significant increase in voters this year, it would have been very difficult to draw a sample proportional to the population of likely voters, since it was something of a mystery who would actually turn out. It is likely that the polling aggregate Silver based his model on miscounted the votes of new voters. The uncertainty index was supposed to counter this potential error, but it’s clear that it was not enough to offset how poorly the aggregate polling gauged voters’ intentions.

What did we get wrong?

The majority of the blame should be placed on us as consumers and members of the media. ABC (which now owns Silver’s blog FiveThirtyEight) is terrible at relaying the statistical information in Silver’s projections to the public. Take, for example, the claim that Silver guessed 49 out of 50 states correctly. What does that actually mean? Does it just mean that he correctly guessed who would win each state? Does it mean he came very close to projecting the final vote percentages? Media networks choose their words poorly when they translate what Silver’s model actually produces.

Unsurprisingly, there is a lot of criticism from political pundits and figures directed at Silver for not declaring specific candidates outright favorites. A massive mistake that lots of people make when they look at Silver’s model is to assume that if someone has the higher percentage, they will win. A percentage is a percentage for a reason. Let’s take the supposed misprediction of 2016 as an example. Clinton was given a 71% chance to win while Trump was given a 29% chance. Trump’s victory was still very much possible, as roughly 3 in 10 outcomes went Republican. Did Clinton have the better chance? Yes, but that didn’t mean she was going to win, because events that happen 29% of the time happen 29% of the time. Blaming Silver is like blaming the weather forecast for being wrong when it says there’s a 30% chance of rain and it rains.
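To make the point concrete, here is a small simulation sketch. It assumes each election is an independent draw in which the underdog wins with a fixed probability, which is a simplification of how a real forecast works, and the function name and seed are made up for the example.

```python
import random


def simulate_upset_rate(underdog_prob, n_elections, seed=0):
    """Fraction of simulated elections won by the underdog.

    Each election is treated as an independent coin flip weighted by
    underdog_prob (an illustrative assumption, not a real forecast model).
    """
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    upsets = sum(1 for _ in range(n_elections) if rng.random() < underdog_prob)
    return upsets / n_elections


if __name__ == "__main__":
    rate = simulate_upset_rate(0.29, 100_000)
    print(f"Underdog won {rate:.1%} of simulated elections")
```

Run over many simulated elections, the underdog’s share of wins settles close to 29%. A single upset is exactly what a 29% forecast says will happen some of the time, so one result cannot show that the forecast was wrong.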

How does this reflect in terms of public perception of polling?

Republican pollster Frank Luntz has stated that the polling industry is done because of its inaccuracies. It may well be done, but perhaps for an entirely different reason. As humans, we desire certainty about the future, and we grasp onto anything that gives us a perception of certainty. So when we find something like polling that seems to offer certainty, we hold onto it with a full embrace. However, a poll is just a sample of the population, and there is still a considerable margin of error. It’s hard to get hundreds of millions of people to understand that a forecast is just a prediction and may not come true. And considering the polling misses of 2016 and 2020, it will be hard to win back a positive public perception of polling.
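For a sense of scale, the textbook margin of error for a single poll can be sketched as below. This assumes a simple random sample and a 95% confidence level (z = 1.96). Real polls use weighting and likely-voter screens that widen the true uncertainty, and the formula says nothing about systematic errors such as misjudging turnout.

```python
import math


def margin_of_error(sample_size, proportion=0.5, z=1.96):
    """Approximate margin of error for a proportion from a simple random sample.

    proportion=0.5 is the worst case; z=1.96 corresponds to 95% confidence.
    """
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    return z * math.sqrt(proportion * (1 - proportion) / sample_size)


if __name__ == "__main__":
    for n in (500, 1000, 4000):
        print(f"n={n}: +/- {margin_of_error(n) * 100:.1f} points")
```

A poll of 1,000 people carries a margin of error of roughly 3 percentage points on each candidate’s share, and quadrupling the sample only cuts that in half.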

What does Silver need to adjust?

It’s hard to say. As some experts would argue, 2020 was especially hard to forecast given the uncertainty around voting procedures, the rise in mail-in ballots, and the threat of foreign interference. Sure, there are many tactics polling organizations could change for upcoming elections, especially with new data on the demographics of people who voted for the first time in a long time. Of course, there will be more challenges. Will voter turnout remain at 2020 levels over the next few cycles, or will it fall back in 2024? There were likely specific reasons that such a massive number of new voters turned out in 2020, and they may not apply in 2024. It could’ve been the mass layoffs that gave more people the flexibility to vote. It could have been a stronger emotional desire to vote amid ever-increasing tensions in an emotionally unsettling pandemic. There are lots of factors Silver has to sort out by 2024, and it won’t be easy to tell in advance whether the polls will get a grip.

Conclusion

Nate Silver’s predictions in 2020 were slightly off, but given the circumstances that complicated polling this year, there are reasons why his model did not exactly knock it out of the park. 2024 (and even the 2022 midterms) will be a new challenge, as pollsters again have to estimate turnout and results in states across the country, now with brand new data from 2020. It will be an interesting few years as the methodologists tune their algorithms to get closer to an accurate election projection.